Prepared by: Lighthouse Labs <arnold@lighthouse.cx>

Thesis

Is it possible to engage frontier AI models to act as a diligent screening tool for grant programs?

Exploration

- Let’s seed an impartial evaluator that is a frontier AI model.

- Populate prompt context with known best practices:

- Weighted rubrics

- ENS alignment (best guess)

- Hard red flags

- Ask it to:

- Provide clear evals and feedback

- Highlight application discrepancies/gaps for stewards to formally address

- Provide funding recommendation

- Backtest on historical SPP applications

The prompt should eventually encode shared values distilled from the community and experts.

The final prompt used: https://hackmd.io/a1o98BBHRI2pNmIHtQOFvg

Experiment

- Lighthouse Labs ran the prompt over 10 applications from SPP2.

- Models used:

- Claude Code / Opus 4.6 / Sonnet 4.5

- Extended thinking (sometimes)

- Variation A

- Pure prompt

- Variation B

- Pure prompt with additional Instructions

- Please also investigate disclosed github repositories and analyse contributor activity such as number of contributors and commit frequency.

- Pure prompt with additional Instructions

Interim results

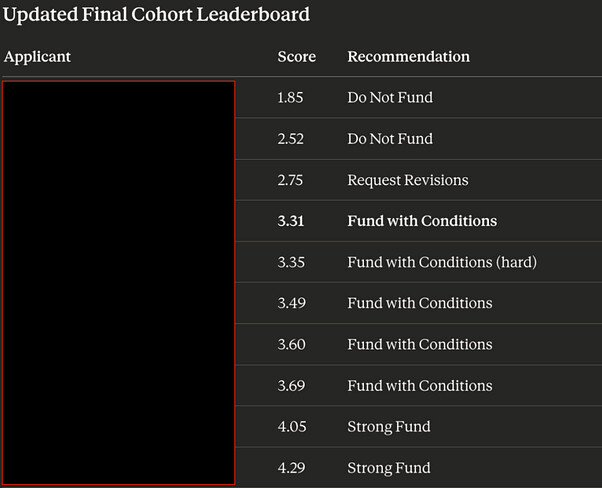

- Only 20% of the projects sampled were Strong fund signals.

- 50% of projects required additional guardrails based on the evaluation rubric meaning the AI believed clearer commitments needed to be made from applicants.

- 20% clear Do not fund.

We then asked the AI to aggregate the applications observed and produce a report of the most prominent problems observed across all applications. The following is verbatim from the AI.

Key takeaway here is that we can get somewhat meaningful and consistent evals produced.

Problems discovered (and teams anonymised)

No budget breakdown (universal)

Every application failed this. Ranges from percentage-category estimates to single dollar figures with no supporting structure. For six- and seven-figure asks, this is the most basic accountability mechanism missing from the entire cohort. The DAO cannot distinguish appropriate pricing from padding without line items.

KPIs that aren’t KPIs (near-universal)

Most proposals list measurement categories rather than targets — “user engagement,” “community satisfaction,” “accuracy.” A real KPI can fail. Almost none of these can. The rare exceptions committed to specific numbers against specific timelines and were verifiably better applications as a result.

Funded scope defined as “TBD after research”

Several applications included aspirational deliverables where the team genuinely doesn’t know what they’ll build yet — and asked the DAO to fund the discovery phase as if it were a committed deliverable. The correct approach is either to exclude undefined scope or stage the funding with a milestone gate before the larger commitment releases.

ENS as one client among several

The most common structural issue in the lower half of the cohort: teams that build the same product for multiple DAOs and submitted their standard client pitch with “ENS” substituted in. Nothing in the scope is specifically valuable to ENS as opposed to any other DAO that would pay $300K. The program’s minimum grant level attracts this behavior.

No quarterly milestone structure

Most proposals define scope as feature lists rather than temporal delivery plans. With no quarterly gates tied to specific deliverables, the DAO has no mechanism to pause or redirect streams that underperform — which matters because SPP payments stream continuously regardless of delivery status.

GitHub evidence doesn’t match claimed team size

A recurring pattern: claimed engineering headcounts that don’t match visible commit activity even after accounting for non-technical roles. The applications where repo evidence clearly validated team size claims were the same applications that scored highest overall.

Scope overlap without differentiation

Multiple applicants proposed notification systems, delegate dashboards, and financial transparency tooling in the same cohort with no acknowledgment of the overlap and no explanation of why the DAO should fund parallel approaches. The combined ask across overlapping scope exceeded $1M.

Open-source compliance as afterthought

The program requires all directly-funded work to be MIT-licensed. Several applications either didn’t address this at submission, addressed it only after steward prompting, or committed to open-sourcing “at end of program” rather than throughout. The requirement appears to not be well understood at submission time.

COI disclosures that don’t resolve the conflict

Disclosure is necessary but not sufficient. The appropriate response to a structural conflict of interest is a commitment that removes it — not a promise to manage it through recusal.

Solutions to Common SPP Application Problems (Opus4.6 generated)

1. Budget breakdown → Standardized budget template

Require applicants to submit a structured budget form alongside their proposal — headcount by role, infrastructure/tooling costs, travel/events, and overhead. Publish a reference format so there’s no ambiguity about what’s expected. Cap any single line item above 40% of the total without additional justification. This shifts the burden of proof from the DAO asking questions post-submission to applicants justifying costs upfront.

2. KPIs that aren’t KPIs → Require numeric targets with a baseline

Add a mandatory section: “Current state / Baseline” and “Target by end of term.” Any KPI without a number and a measurement method gets flagged at eligibility review. Stewards could maintain a public KPI bank of accepted metrics from prior seasons so applicants have examples to work from — and the DAO builds a growing dataset of governance across cohorts.

3. Undefined scope → Staged funding with milestone gates

Split grants into tranches: an initial 40% release on approval, a second 30% release after a Q2 milestone review, and the final 30% after a Q4 delivery report. This doesn’t punish teams for not knowing everything upfront — it just means genuinely experimental work gets funded at the research scale until it proves itself, rather than at the full scale. Stewards would need clear authority to delay a tranche without triggering a full governance vote each time.

4. Generic DAO tooling repackaged as ENS work → ENS-specific technical investigation requirement

Require applicants to include an “ENS Technical Context” section demonstrating they’ve engaged with ENS’s actual contracts, standards, and roadmap. This could be as simple as: “Which ENS contracts does your work interact with? What specific ENS limitations or gaps does it address? How does your scope relate to ENSv2?” Applications that can’t answer these concretely are revealing that they haven’t done the homework. Stewards could evaluate this section as a threshold gate before scoring the rest.

5. No milestone structure → Quarterly commitment tables as a required format

Make the quarterly delivery table mandatory — Q1 through Q4, specific deliverables per quarter, verification method for each. NameHash Labs did this voluntarily and it was the clearest accountability structure in the cohort. If it’s required, there’s no reason for other applicants not to do it. Combine this with the staged funding above so milestone tables have actual consequences rather than being aspirational.

6. Team size vs. GitHub mismatch → Pre-funding verification call

Before final approval, stewards hold a 30-minute technical verification call with the engineering lead. Not an interview — a structured review of the team’s existing repos, commit history, and architecture decisions. This surfaces the mismatch between claimed and verifiable capacity before funds flow, and gives genuine teams an opportunity to explain gaps (offshore contributors, private repos, etc.) on the record.

7. Scope overlap → Explicit differentiation requirement and cohort-level visibility

Publish the full applicant list and scope summaries early in the window so teams can see what else is in the cohort. Require a “How does this differ from X” section when scope overlaps are obvious — stewards can flag this at eligibility review. Longer term, the DAO could maintain a live map of funded work across SPP seasons so new applicants can identify genuine gaps rather than re-funding covered territory.

8. Open-source compliance as afterthought → Checklist at eligibility gate

Add open-source compliance to the eligibility confirmation checklist with specific questions: Is the work MIT-licensed from day one or at end of term? Is there a public repository URL? Will proprietary components be separated from grant-funded components? Applications that can’t answer these get a compliance note before the vote, not after. The steward’s eligibility confirmation email could include a standard reminder with the exact program language.

9. COI disclosure without resolution → Structural resolution required, not just disclosure

The application should require a COI section that not only discloses conflicts but proposes a structural resolution — and stewards should evaluate whether the proposed resolution is adequate before endorsing. “We will recuse from relevant discussions” is not a resolution. “We will not execute scope item X under this grant; the DAO will separately procure that work” is. The distinction is between managing a conflict and removing it.

Meta-solution: Publish evaluation rubrics before the application window opens

The single highest-leverage change. If applicants know they will be scored on budget transparency, ENS-specific technical investigation, quarterly milestone structure, and KPI specificity before they write their proposals, the average quality of submissions rises substantially. Rubrics don’t need to be the exact internal scoring weights — just the criteria and what good looks like. Several applicants in this cohort would have written materially different proposals with this information available upfront.

Risks

-

Knowing the prompt ahead of time could be a case for gamification.

- Perhaps ideals should be communicated publicly, with exact methodology kept secret.

- Anyone who knows the prompt cannot be a service provider.

- Allow for up to two resubmissions for applications that do not meet the acceptance threshold

-

AIs are agreeable even with multiple red flags raised, and weak applications may still score well.

- This is a R&D problem and can be improved over time.

Conclusion

- This experiment demonstrates that AI can provide a consistent scoring, ranking and evaluation methodology for screening applications

- Quality, actionable feedback can be provided for every applicant, allowing for fast iteration.

- Evaluation with actionable feedback provides intervention points for voters to align on, reducing cognitive burden.

- Screening applications for eligibility can be automated ensuring that applicants meet the standard as defined by the DAO.

- Perhaps most importantly: the uncomfortable observations get surfaced without social cost (i.e. “the AI said what everyone is thinking”).